In recent months there has been a lot of talk about big stuff. Between ‘Big Data’ and calls for a return to ‘longue durée’ history writing, lots of people seem to be trying to carve out their own small bit of ‘big data’. This post is a reflection on what feels to me to be an important emerging strategy for interrogating information, driven by the arrival of ‘big data’ (a ‘macroscope’); and a tentative step beyond that, to ask what is lost by focusing exclusively on the very large. The place I need to start is with the emergence of what feels to me like an increasingly commonplace label, a ‘macroscope’, for a core aspiration of many people working in the Digital Humanities.

As far as I can tell, the term ‘macroscope’ was coined in 1969 by Piers Jacob (better known by his pen name, Piers Anthony), and used as the title of his science fiction/fantasy novel of the same year. In the novel the ‘macroscope’, a large crystal able to focus on any location in space-time with profound clarity, is used to produce something like a telescope of infinite resolution: in other words, a way of viewing the world that encompasses both the minuscule and the massive. The term was also taken up by Joël de Rosnay and deployed as the title of a provocative book on systems analysis, first published in French in 1975 and in English translation in 1979. The label has also had a long and undistinguished afterlife as the trademark for a suite of project management tools, a ‘methodology suite’, supported by the Fujitsu Corporation.

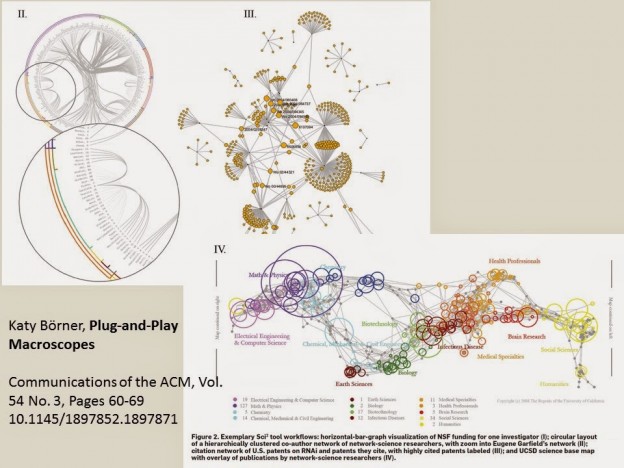

But I think the starting point for interest in the possibility of creating a ‘macroscope’ for the Digital Humanities comes out of computer science, and the work of Katy Börner from around 2011. Her designs and advocacy for the development of a ‘Plug and Play Macroscope’ seem to have popularised the idea among a wider group of Digital Humanists and developers. To quote Börner:

Read More: Big Data, Small Data and Meaning