In light of word embeddings’ recent popularity, I’ve been playing around with a version called Latent Semantic Analysis (LSA). Admittedly, LSA has fallen out of favor with the rise of neural embeddings like Word2Vec, but there are several virtues to LSA, including decades of study by linguists and computer scientists. (For an introduction to LSA for humanists, I highly recommend Ted Underwood’s post “LSA is a marvelous tool, but…”.) In reality, though, this blog post is less about LSA and more about tinkering with it and using it for parts.

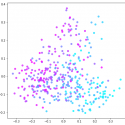

Like other word embeddings, LSA seeks to learn about words’ semantics by way of context. I’ll sidestep discussion of LSA’s specific mechanics by saying that it uses techniques that are closely related to ones commonly used in distant reading. Broadly, LSA constructs something like a document-term matrix (DTM) and then performs something like Principal Component Analysis (PCA) on it.1 (I’ll be using those later in the post.) The art of LSA, however, lies in between these steps.
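For readers who like to see the moving parts, here is a minimal sketch of those two steps, not a definitive implementation. It assumes a four-sentence toy corpus invented for illustration, whitespace tokenization, and NumPy's singular value decomposition as the "something like PCA" step (unlike PCA proper, it does not center the columns first). The helper names `build_dtm` and `lsa` are hypothetical.

```python
import numpy as np

# Toy corpus, invented purely for illustration.
docs = [
    "the whale swam in the sea",
    "the ship sailed the sea",
    "the lawyer read the contract",
    "the court read the contract",
]

def build_dtm(docs):
    """Return (counts, vocab): a documents-by-terms matrix of raw counts."""
    vocab = sorted({word for doc in docs for word in doc.split()})
    index = {word: j for j, word in enumerate(vocab)}
    counts = np.zeros((len(docs), len(vocab)))
    for i, doc in enumerate(docs):
        for word in doc.split():
            counts[i, index[word]] += 1
    return counts, vocab

def lsa(matrix, k):
    """Truncated SVD: keep only the k largest singular dimensions."""
    u, s, vt = np.linalg.svd(matrix, full_matrices=False)
    doc_vectors = u[:, :k] * s[:k]    # one row per document
    term_vectors = vt[:k].T * s[:k]   # one row per term
    return doc_vectors, term_vectors

counts, vocab = build_dtm(docs)
doc_vectors, term_vectors = lsa(counts, k=2)
```

Any weighting of the counts would slot in between the call to `build_dtm` and the call to `lsa`, which is where the "art" mentioned above comes in.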

Typically, after constructing a corpus matrix, LSA involves some kind of weighting of the raw word counts. The most familiar weighting scheme is L1 normalization: sum up the number of words in a document and divide each individual word count by that total, so that each cell in the matrix represents a word’s relative frequency. This is something distant readers do all the time. However, there is an extensive literature on LSA devoted to alternate weights that improve performance on certain tasks, such as analogies or document retrieval, and on different types of documents.
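To make the weighting step concrete, here is a small sketch under stated assumptions. The count matrix is hypothetical, and tf-idf is my own choice of a familiar alternate weight for comparison; the paragraph above does not single out any particular scheme. The function names are likewise illustrative.

```python
import numpy as np

# Hypothetical raw counts: 3 documents (rows) by 4 terms (columns).
counts = np.array([
    [2.0, 1.0, 1.0, 0.0],
    [4.0, 0.0, 0.0, 4.0],
    [1.0, 0.0, 3.0, 0.0],
])

def l1_normalize(counts):
    """Divide each row by its total so cells become relative frequencies."""
    row_totals = counts.sum(axis=1, keepdims=True)
    row_totals[row_totals == 0] = 1.0  # leave empty documents as all zeros
    return counts / row_totals

def tfidf(counts):
    """One common alternate weight: relative frequency times log(N / df)."""
    n_docs = counts.shape[0]
    doc_freq = (counts > 0).sum(axis=0)             # documents containing each term
    idf = np.log(n_docs / np.maximum(doc_freq, 1))  # 0 for terms found in every document
    return l1_normalize(counts) * idf

rel_freq = l1_normalize(counts)
weighted = tfidf(counts)
```

Under L1 normalization every row of `rel_freq` sums to one. Under this tf-idf variant, the first term appears in all three documents, so its whole column is weighted down to zero, which illustrates how a change of weights can change which features of a corpus the model sees.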

This is the point that piques my curiosity. Can we use different weightings strategically to capture valuable features of a textual corpus? How might we use a semantic model like LSA in existing distant reading practices? The similarity of LSA to a common technique (i.e. PCA) for pattern finding and featurization in distant reading suggests that we can profitably apply its weight schemes to work that we are already doing.

Read the full post here.